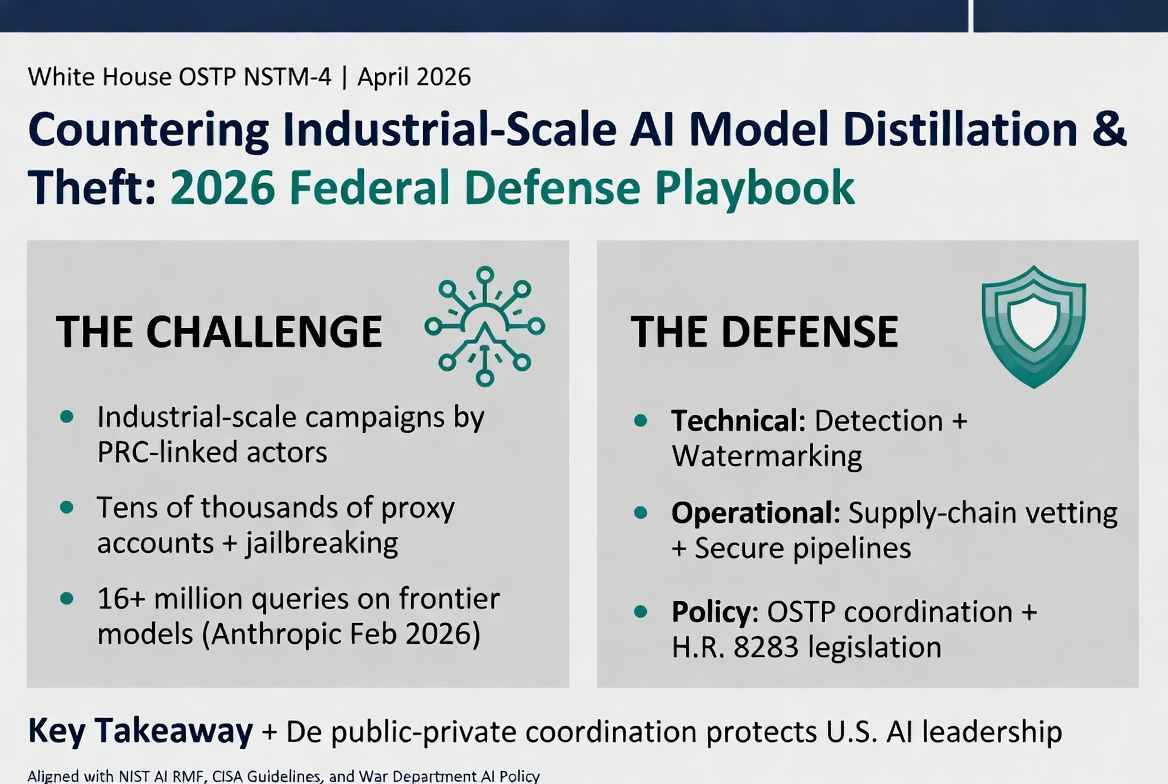

COUNTERING INDUSTRIAL-SCALE AI MODEL DISTILLATION AND THEFT

April 27, 2026: Practical Defense Strategies for Federal AI Programs

Executive Summary

On April 23, 2026, the White House Office of Science and Technology Policy (OSTP) issued Memorandum NSTM-4, “Adversarial Distillation of American AI Models,” alerting federal agencies and the private sector to coordinated, industrial-scale campaigns, primarily orchestrated by entities based in the People’s Republic of China (PRC), to extract capabilities from U.S. frontier AI systems through systematic distillation attacks. These operations use tens of thousands of proxy accounts and advanced jailbreaking techniques to harvest model outputs. Adversaries can then train capable “student” models at a fraction of the original development cost while potentially stripping out critical safety alignment.

This issue gives federal AI program leaders an actionable playbook of practical defense strategies. The measures combine technical controls, operational protocols, interagency coordination mechanisms, and policy levers aligned with the OSTP directive, the July 2025 White House AI Action Plan, and emerging legislation such as the Deterring American AI Model Theft Act of 2026 (H.R. 8283). Implementing them will help safeguard U.S. innovation leadership, protect national security equities embedded in federal AI deployments, and support compliance with FedRAMP, the NIST AI Risk Management Framework (AI RMF 1.0), and CISA AI security guidelines.

The Threat Landscape: Industrial-Scale Distillation Campaigns

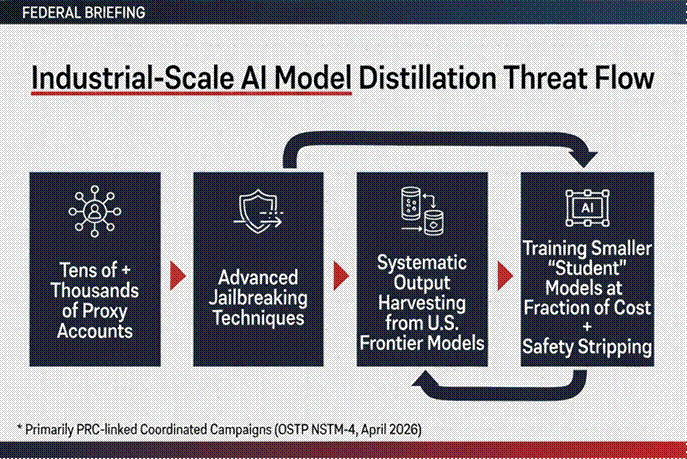

The following infographic illustrates the typical flow of these coordinated campaigns:

What Is Adversarial Distillation?

Knowledge distillation is a legitimate machine learning technique in which a smaller “student” model is trained on the outputs (logits, probabilities, or generated text) of a larger, more capable “teacher” model. In adversarial contexts, foreign actors weaponize this process at massive scale:

• Volume & Velocity: Campaigns have generated 16+ million queries against models like Anthropic’s Claude using ~24,000 fraudulent accounts (February 2026 incidents reported by Anthropic and OpenAI).

• Evasion Tactics: Proxy networks, VPN rotation, account obfuscation, and jailbreak prompts designed to bypass safety filters and extract unaligned or proprietary reasoning traces.

• Impact: Resulting models achieve near-parity on select benchmarks at a fraction of the development cost, eroding U.S. competitive advantage and potentially proliferating unsafe or misaligned AI capabilities globally.
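The legitimate technique defined above can be sketched in its common soft-target form: the student is trained to minimize the KL divergence between the teacher's and its own temperature-softened output distributions. This is a minimal illustration, not a description of any specific campaign; the function names and the default temperature of 2.0 are assumptions chosen for the example. APIs that return only generated text support a cruder variant in which the student is simply fine-tuned on harvested prompt/response pairs.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw logits to probabilities, softened by a temperature > 0."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions,
    scaled by T^2 as in standard soft-target distillation."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return (temperature ** 2) * kl

teacher = [4.0, 1.0, 0.5]
print(distillation_loss(teacher, teacher))          # identical outputs: zero loss
print(distillation_loss(teacher, [0.0, 0.0, 0.0]))  # uninformed student: positive loss
```

Scaling by T² keeps the soft-target gradients at a comparable magnitude as the temperature changes, which is why the factor appears in most formulations of this loss.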

Federal AI programs are direct targets. Agencies developing or fine-tuning frontier models (War Department, Intelligence Community elements, DOE national laboratories) face IP exfiltration risks, while agencies procuring commercial models face supply-chain risk if a vendor’s training data or weights have been indirectly influenced by adversarially distilled models.

OSTP Memorandum NSTM-4: Key Directives (April 23, 2026)

The memorandum, signed by Michael J. Kratsios, Assistant to the President for Science and Technology and Director of OSTP, and distributed to the heads of all executive departments and agencies, mandates enhanced public-private collaboration:

1. Threat Intelligence Sharing: OSTP will disseminate detailed indicators of compromise (IOCs), actor tactics, techniques, and procedures (TTPs), and campaign attributions to U.S. AI companies.

2. Best Practices Development: Joint government-industry working groups will codify technical and procedural defenses against large-scale distillation.

3. Accountability Measures: Exploring export controls, entity-list designations, and sanctions, and supporting legislative tools such as H.R. 8283.

Important Distinction: The memorandum explicitly preserves lawful, transparent distillation for open-source research while condemning covert, adversarial campaigns that violate terms of service and U.S. export controls.
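The IOC sharing in item 1 only pays off if agencies can match indicators against their own telemetry. The sketch below screens API request logs against network indicators. It assumes, hypothetically, that IOCs arrive as CIDR ranges and that logs can be reduced to (account, source IP) pairs; the actual NSTM-4 indicator format is not described here. The example ranges are reserved documentation addresses (RFC 5737), not real indicators, and the function names are illustrative.

```python
import ipaddress

# Hypothetical IOC entries: CIDR ranges attributed to proxy infrastructure.
# These are RFC 5737 documentation ranges, used only as placeholders.
IOC_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/25"),  # covers .0 through .127 only
]

def matches_ioc(source_ip: str, networks=IOC_NETWORKS) -> bool:
    """Return True if a request's source IP falls inside any IOC network."""
    addr = ipaddress.ip_address(source_ip)
    # Membership is False (not an error) when IPv4/IPv6 versions differ.
    return any(addr in net for net in networks)

def flag_requests(log_rows):
    """Yield (account_id, source_ip) for log rows whose IP matches an IOC."""
    for account_id, source_ip in log_rows:
        if matches_ioc(source_ip):
            yield account_id, source_ip

logs = [("acct-001", "192.0.2.17"), ("acct-002", "203.0.113.5"),
        ("acct-003", "198.51.100.200")]
print(list(flag_requests(logs)))
```

Because these campaigns rotate proxies and VPN endpoints, IP matching on its own is a weak signal; it is most useful when combined with account-level behavioral analytics.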

Practical Defense Strategies for Federal AI Programs

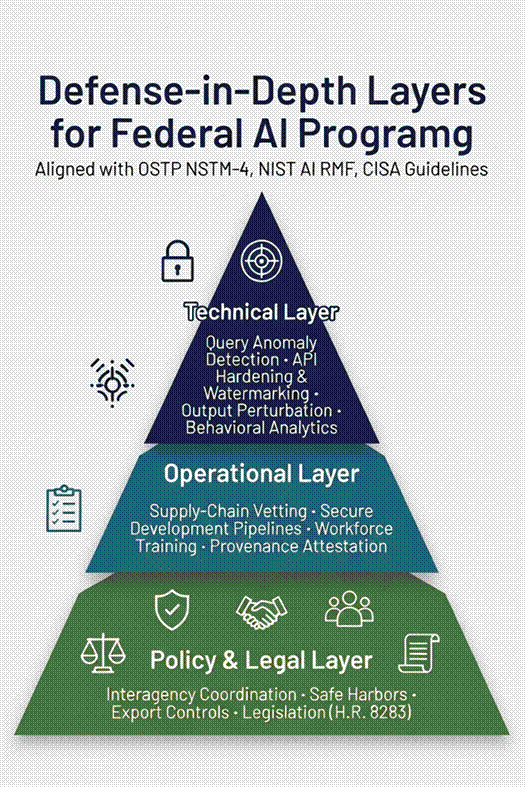

Federal AI program managers should adopt a defense-in-depth approach spanning technical, operational, and policy layers. The following infographic summarizes the layered strategy:

Detailed strategies are outlined in the table below, aligned with OSTP NSTM-4, NIST AI RMF, and CISA guidelines.

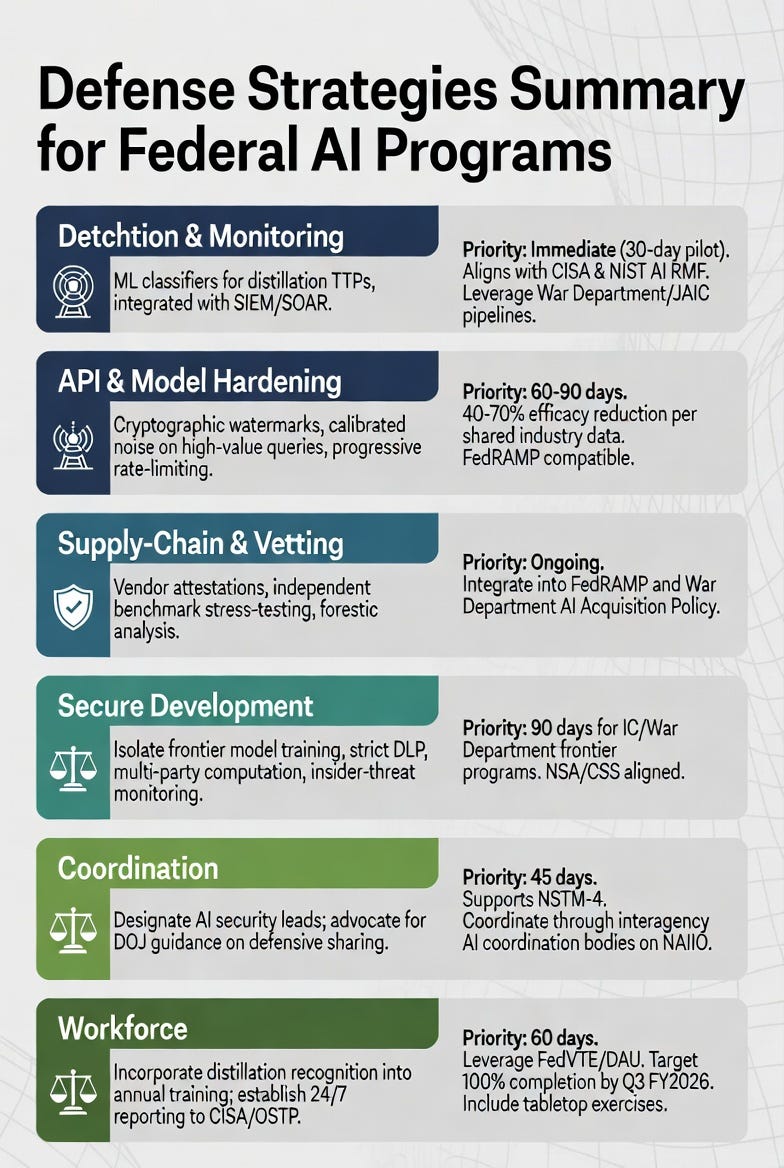
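As one illustration of a technical-layer control, the perturbation controls named in Wave 2 of the roadmap can take a simple form for APIs that expose token probabilities. The sketch below assumes such an API and applies output coarsening: it returns only the top-k tokens and rounds their log-probabilities, reducing the signal available for soft-target distillation. The function name and defaults (top_k=5, two decimals) are hypothetical, and this is not a watermarking technique.

```python
import math

def harden_logprobs(token_logprobs, top_k=5, decimals=2):
    """Coarsen an API's probability output before returning it to a caller.

    Keeps only the top_k most likely tokens and rounds their log-probabilities
    to a fixed number of decimals, so callers see a coarser view of the
    model's full output distribution.
    """
    ranked = sorted(token_logprobs.items(), key=lambda kv: kv[1], reverse=True)
    return {tok: round(lp, decimals) for tok, lp in ranked[:top_k]}

# Toy next-token distribution (illustrative values only).
raw = {"the": math.log(0.62), "a": math.log(0.21), "an": math.log(0.09),
       "this": math.log(0.05), "that": math.log(0.03)}
hardened = harden_logprobs(raw, top_k=2)
print(hardened)
```

This limits soft-target distillation only; it does nothing against campaigns that harvest generated text, which is why it belongs alongside the operational and policy layers rather than replacing them.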

Phased Implementation Roadmap for Federal AI Programs

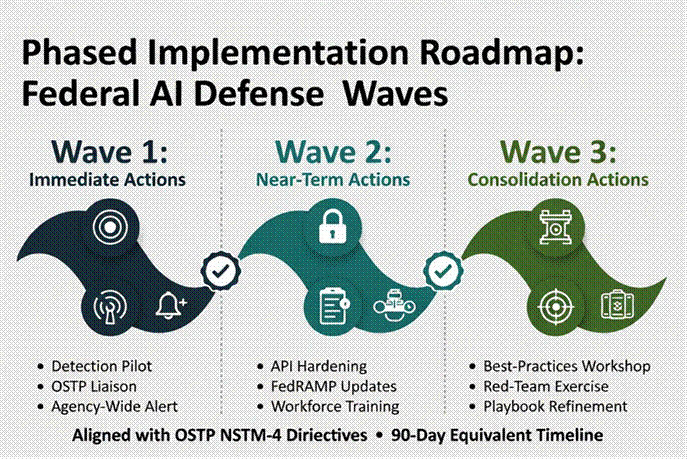

The following phased approach aligns with OSTP NSTM-4 directives:

Wave 1 (Immediate Actions): Stand up an internal distillation-detection pilot on all externally facing AI APIs; designate an OSTP liaison; issue an agency-wide alert referencing NSTM-4.

Wave 2 (Near-Term Actions): Integrate watermarking and perturbation controls into production models; update FedRAMP packages and vendor contracts with new attestation requirements; complete the first round of workforce training.

Wave 3 (Consolidation Actions): Participate in the inaugural OSTP-industry best-practices workshop; conduct a red-team exercise simulating a PRC distillation campaign; submit lessons learned to the National Artificial Intelligence Initiative Office (NAIIO) to refine the government-wide playbook.
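The Wave 1 detection pilot has to account for how campaign load is spread. The figures cited earlier (16+ million queries across ~24,000 accounts) average out to at least about 667 queries per account (16,000,000 ÷ 24,000 ≈ 666.7), a volume that may not stand out under per-account rate limits. A minimal sketch, assuming each API call can be tagged with a shared "fingerprint" (a hypothetical attribute such as a payment-instrument hash, network block, or client signature), aggregates volume per fingerprint and flags clusters that are both busy and spread across several accounts. The function name and thresholds are illustrative, not recommended values.

```python
from collections import defaultdict

def flag_clusters(events, per_cluster_threshold, min_accounts=2):
    """Group API calls by a shared fingerprint and flag suspicious clusters.

    events: iterable of (account_id, fingerprint) pairs, one per API call.
    A cluster is flagged when its combined call volume reaches
    per_cluster_threshold and it spans at least min_accounts accounts,
    which targets load spread across many individually modest accounts.
    Returns {fingerprint: (total_calls, distinct_accounts)}.
    """
    calls = defaultdict(int)
    accounts = defaultdict(set)
    for account_id, fingerprint in events:
        calls[fingerprint] += 1
        accounts[fingerprint].add(account_id)
    return {
        fp: (calls[fp], len(accounts[fp]))
        for fp in calls
        if calls[fp] >= per_cluster_threshold and len(accounts[fp]) >= min_accounts
    }

# Toy log: three accounts share hypothetical fingerprint "fp-A";
# one ordinary user has "fp-B".
events = ([("acct-1", "fp-A")] * 40 + [("acct-2", "fp-A")] * 35
          + [("acct-3", "fp-A")] * 30 + [("acct-9", "fp-B")] * 50)
print(flag_clusters(events, per_cluster_threshold=100))
```

In the toy log, the ordinary user's 50 calls exceed every individual account in the shared cluster, so a per-account threshold would flag the wrong party; aggregating by fingerprint surfaces the 105-call, three-account cluster instead.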

Key Takeaways

1. The threat is real, ongoing, and industrial in scale; federal programs cannot treat distillation as a niche academic concern.

2. Defense is achievable through layered, standards-aligned controls that also strengthen broader AI supply-chain security.

3. Success depends on rapid public-private coordination; federal agencies must lead by example in adopting and sharing defenses.

4. Legislative and regulatory tailwinds (export controls, safe-harbor clarity, H.R. 8283) will amplify technical measures.

Call to Action

Federal AI program leaders are encouraged to review this guidance and integrate the relevant strategies into their programs. For questions on technical controls or interagency coordination, contact your agency’s OSTP point of contact or CISA’s AI Security Team (ai@cisa.dhs.gov).

References & Further Reading

• White House OSTP Memorandum NSTM-4, “Adversarial Distillation of American AI Models” (April 23, 2026)

• White House AI Action Plan (July 2025)

• NIST AI Risk Management Framework (AI RMF 1.0) & Generative AI Profile (https://www.nist.gov/itl/ai-risk-management-framework)

• CISA AI Security Guidelines & Incident Response Playbook (2025) (https://www.cisa.gov/topics/artificial-intelligence)

• House Select Committee on the CCP — Testimony on AI Model Theft (April 16, 2026)

• Deterring American AI Model Theft Act of 2026 (H.R. 8283, 119th Congress)

• Anthropic & OpenAI Public Disclosures on PRC Distillation Campaigns (February–April 2026)

The Exchange Weekly is a production of Metora Solutions LLC, a Service-Disabled Veteran-Owned Small Business. Every effort is made to keep details accurate as of publication time, but readers should always confirm time-sensitive items such as policy changes, budget figures, and timelines with official documents and briefings. This is not legal, investment, procurement, security, compliance, or technical advice. Always validate with primary sources before action. All rights reserved. Copyright Metora Solutions LLC 2026.

This update was assembled using a mix of human editorial judgment, public records, and reputable national and sector-specific news sources, with help from artificial intelligence tools to summarize and organize information. All information is drawn from publicly available sources listed above.

For more information about our work and other projects, drop us a note at info@metorasolutions.com.