Mastering Federal AI Evaluation and Procurement: GSA-NIST Partnership Delivers the Playbook Agencies Need Now

GSA and NIST’s new collaboration equips federal leaders with standardized testing, benchmarks, and procurement tools to accelerate secure AI adoption through the USAi platform.

Executive Summary

Federal executives face a clear choice this week: navigate AI procurement with yesterday’s fragmented approaches, or use the new GSA-NIST partnership to evaluate, test, and acquire systems with confidence. The March 18, 2026, memorandum of understanding (MOU) between the General Services Administration and NIST’s Center for AI Standards and Innovation (CAISI) directly addresses the evaluation gaps that have slowed mission-critical deployments. It strengthens USAi, GSA’s secure government-wide AI platform, with rigorous, workflow-ready measurement science.

This partnership arrives at the precise moment when agencies must scale AI while meeting OMB acquisition mandates and White House priorities. Early signals from the USAi Console already show agencies gaining side-by-side model comparisons and real-time performance telemetry. The new CAISI collaboration adds standardized benchmarks, pre-deployment checklists, and post-deployment monitoring tools. The result is faster time-to-value, lower duplication costs, and measurable risk reduction.

For C-suite leaders, CIOs, CISOs, CTOs, IT managers, and acquisition professionals, the message is urgent. The tools to move from experimentation to enterprise deployment now exist inside a single, FedRAMP-authorized environment. Those who master them this quarter will lead their agencies in responsible AI scale. Those who wait risk procurement delays, compliance gaps, and missed opportunities under America’s AI Action Plan.

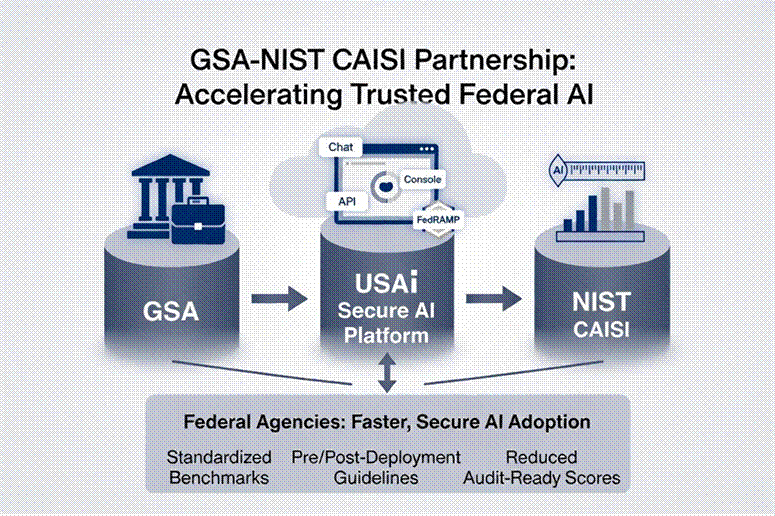

Figure 1: GSA-NIST CAISI Partnership — Accelerating Trusted Federal AI Adoption

Background on the GSA-NIST Partnership and USAi Platform

On March 18, 2026, GSA Administrator Edward C. Forst and Acting NIST Director Craig Burkhardt announced the joint effort to strengthen federal AI evaluation science. The MOU positions CAISI’s measurement expertise inside USAi, the secure generative AI platform GSA launched in August 2025. USAi operates as a centralized procurement toolbox and experimentation environment. Agencies access leading American AI models through a unified Chat interface, standardized API framework, and Console for evaluation.

USAi already serves approximately 15 agencies as of early April 2026. It delivers real-time metrics on model performance, safety telemetry, and bias indicators. The platform integrates models from providers that meet strict FedRAMP standards while maintaining full data sovereignty and privacy controls. Agencies use the Console to run standardized test suites, compare outputs side-by-side, and export evaluation data for internal reviews or audits.

The partnership builds directly on this foundation. CAISI will supply tooling and methodological guidance so GSA can evaluate advanced models, select and interpret benchmarks, and perform hands-on testing inside actual federal workflows. GSA and NIST will jointly produce practical resources: evaluation guidelines and checklists that every agency can adopt without reinventing the wheel. The work explicitly supports the White House’s America’s AI Action Plan, which calls for stronger evaluation practices and accelerated government-wide AI adoption.

This is not another pilot. It is operational infrastructure designed for procurement decisions that are being made today.

Key Evaluation Frameworks and Benchmarks Being Developed

CAISI brings gold-standard measurement science developed through ongoing work with industry leaders and other government partners. Under the MOU, CAISI and GSA are creating robust methodologies to evaluate three core dimensions inside USAi workflows: performance, security, and mission-specific functionality.

Expect these deliverables in the coming months:

• Standardized benchmarks for frontier models, selected and interpreted for federal use cases.

• Pre-deployment assessment guidelines that agencies can run directly in the USAi Console.

• Post-deployment performance measurement tools aligned to each agency’s unique mission and operations.

• Hands-on testing protocols that incorporate real federal worker workflows rather than generic lab conditions.

These frameworks will not remain theoretical. GSA and NIST have committed to producing clear evaluation guidelines and checklists that acquisition teams can insert into solicitations and that program offices can use during pilot phases. Early indications point to side-by-side model scoring on accuracy, reliability, adversarial robustness, and bias metrics tailored to government contexts. Agencies already inside USAi gain an immediate advantage: the Console captures model outputs and safety telemetry today, and the new CAISI resources are intended to turn that raw data into decision-grade scores that procurement officers can defend in source selection.
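To make the idea of side-by-side scoring concrete, here is a minimal sketch in Python of how an evaluation team might roll per-dimension results into one comparable number. Everything in it is an assumption for illustration: the weights, the 0-to-1 scale, the "higher is better" convention for every dimension (including bias), and the names `ModelEvaluation`, `composite_score`, and `rank_models`. None of it reflects a published CAISI methodology or a USAi Console export format.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; GSA and CAISI have not
# published a weighting scheme. Dimension names mirror the article.
WEIGHTS = {
    "accuracy": 0.4,
    "reliability": 0.2,
    "adversarial_robustness": 0.2,
    "bias": 0.2,
}


@dataclass
class ModelEvaluation:
    """Scores for one model; each value is assumed to lie in [0, 1], higher is better."""
    name: str
    scores: dict


def composite_score(evaluation, weights=WEIGHTS):
    """Return the weighted average of the model's per-dimension scores."""
    missing = set(weights) - set(evaluation.scores)
    if missing:
        raise ValueError(f"{evaluation.name} is missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    weighted_sum = sum(weights[dim] * evaluation.scores[dim] for dim in weights)
    return weighted_sum / total_weight


def rank_models(evaluations, weights=WEIGHTS):
    """Return the evaluations sorted from highest to lowest composite score."""
    return sorted(evaluations, key=lambda e: composite_score(e, weights), reverse=True)
```

A single composite is easy to defend only if the weights are documented before results are seen, so a team using an approach like this would likely fix the weights in the solicitation and keep the per-dimension scores alongside the total.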

Procurement Implications and New Contract Requirements

The partnership directly reshapes how agencies buy AI. USAi now functions as the government’s preferred evaluation and procurement gateway. Vendors whose models pass the forthcoming CAISI-backed benchmarks gain a faster path to award on GSA schedules and other vehicles.

Related developments reinforce the shift. GSA’s draft clause GSAR 552.239-7001, proposed in March 2026, introduces new safeguarding requirements for AI systems used in contract performance. Contractors must disclose AI systems, maintain documentation consistent with NIST AI Risk Management Framework principles, grant the government audit rights, and comply with incident reporting. The clause also emphasizes American AI preference and data-handling controls.

For system integrators and service providers, the implication is clear. Proposals that reference USAi Console evaluations and CAISI methodologies will stand out in technical evaluations. Agencies will increasingly require evidence of benchmark performance before awarding task orders. Contracting officers gain standardized language and checklists to verify compliance rather than negotiating evaluation criteria ad hoc.

Step-by-Step Playbook for Agencies

Agencies can begin implementation immediately using existing USAi capabilities while the new CAISI resources roll out. Here is the practical sequence:

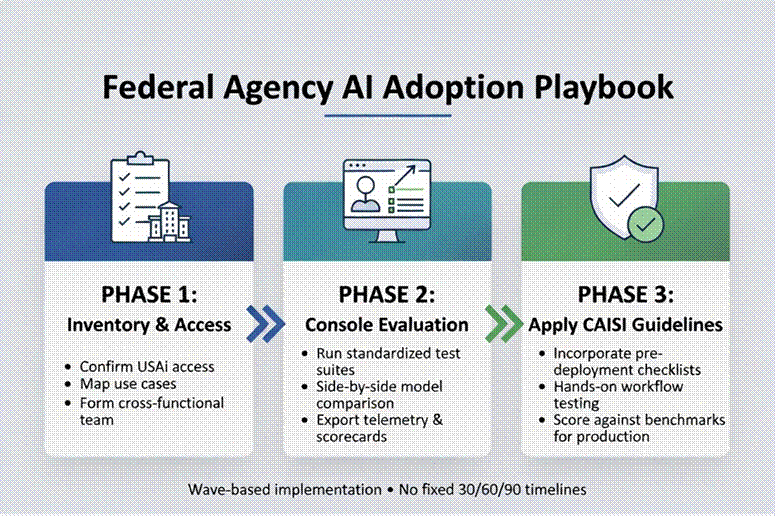

Figure 2: Federal Agency AI Adoption Playbook — Wave-based Implementation

Decision Tree for Procurement

• Does the use case require frontier capabilities? → Route through USAi Console + CAISI benchmarks.

• Is the model already evaluated in USAi? → Fast-track via existing schedule.

• Does it fail key safety or bias thresholds? → Require vendor remediation or select an alternative.
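The three questions above can be sketched as a small routing function. This is one possible reading, not an official GSA workflow: the order in which the questions are checked, the fallback route for cases none of them covers, and the names `Candidate`, `Route`, and `procurement_route` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    EVALUATE_IN_USAI = "Route through USAi Console + CAISI benchmarks"
    FAST_TRACK = "Fast-track via existing schedule"
    REMEDIATE_OR_REPLACE = "Require vendor remediation or select an alternative"
    # Assumed fallback; the article does not say what happens otherwise.
    STANDARD_REVIEW = "Follow the agency's standard acquisition review"


@dataclass
class Candidate:
    requires_frontier: bool
    evaluated_in_usai: bool
    # None means no safety or bias results are available yet.
    passes_thresholds: Optional[bool] = None


def procurement_route(candidate):
    """Map a candidate model to a route. The check order is an assumption."""
    # A known threshold failure blocks every other path.
    if candidate.passes_thresholds is False:
        return Route.REMEDIATE_OR_REPLACE
    # Models already evaluated in USAi can use the faster path.
    if candidate.evaluated_in_usai:
        return Route.FAST_TRACK
    # Unevaluated frontier use cases go through evaluation first.
    if candidate.requires_frontier:
        return Route.EVALUATE_IN_USAI
    return Route.STANDARD_REVIEW
```

Checking the threshold failure first reflects a conservative design choice: a model that has failed safety or bias checks should not reach the fast track simply because an evaluation record exists.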

Key questions to ask vendors:

• Which USAi Console evaluations have you completed?

• Can you provide CAISI-aligned benchmark scores for our mission workflow?

• How will you support post-deployment monitoring inside USAi?

• What documentation exists for NIST AI RMF compliance?

Risk Management and Governance Best Practices

The partnership embeds risk management at the point of procurement rather than after deployment. CAISI’s measurement science directly addresses the three risks agencies cite most often: performance gaps in real workflows, security vulnerabilities, and mission misalignment.

Best practices now include:

• Mandatory USAi Console evaluation before any new AI system enters production.

• Use of CAISI-provided checklists to document pre-deployment assessments.

• Integration of post-deployment telemetry into agency governance dashboards.

• Alignment of all contract language with the forthcoming GSAR AI clause and OMB M-25-22 acquisition guidance.

CISOs gain concrete tools to verify adversarial robustness. CTOs receive workflow-specific performance data. Acquisition leaders can defend source selections with standardized, auditable scores.

Early Adopter Insights

As of early April 2026, approximately 15 agencies are actively using USAi. While specific named case studies remain under internal review, GSA’s own AI use-case inventory demonstrates the platform’s value. GSAi, the enterprise chatbot, and the USAi Console itself serve as live examples of secure, shared-service deployment that reduces individual agency build costs and standardizes evaluation.

Early users report faster model selection cycles and clearer documentation for compliance reviews. The Console’s side-by-side comparison capability has already surfaced performance differences that traditional RFIs missed.

Future Outlook Tied to America’s AI Action Plan and OMB Guidance

The GSA-NIST partnership forms a cornerstone of the broader America’s AI Action Plan released in July 2025. That plan, reinforced by OMB memoranda M-25-21 (governance and public trust) and M-25-22 (efficient acquisition), charges agencies with accelerating AI while maintaining rigorous evaluation standards.

Looking ahead, CAISI will continue publishing guidelines and resources so agencies can conduct their own evaluations. USAi will expand model coverage and deepen integration with CAISI benchmarks. Future OMB updates will likely reference these tools as the preferred method for meeting acquisition and risk-management requirements.

The trajectory is clear. Standardized evaluation inside a secure government platform becomes the default path for federal AI procurement. Agencies that embed these practices now will lead the next wave of mission outcomes.

Action Items for CIOs, CTOs, and Acquisition Leaders

1. Schedule a USAi Console demonstration for your leadership team this week.

2. Map your top three 2026 AI initiatives to USAi evaluation workflows.

3. Update acquisition templates to require CAISI-aligned benchmark evidence once released.

4. Brief your contracting officers on the forthcoming evaluation guidelines and draft GSAR clause.

5. Establish a quarterly review cadence that incorporates USAi telemetry into your AI governance dashboard.

Master this framework now, and your agency gains measurable speed, security, and competitive advantage in the federal AI marketplace.

Closing Perspective

The GSA-NIST partnership and USAi platform mark the moment when federal AI moves from aspiration to disciplined execution. Executives who treat evaluation as a procurement discipline rather than an afterthought will deliver results that justify the investment. The infrastructure is built. The tools are arriving. The only remaining variable is execution speed.

DISCLAIMER & COPYRIGHT NOTICE

The Exchange Daily and Weekly deliver verified public-source intelligence for executive decision-makers. All information is from publicly available sources. No information is classified or proprietary. Content is for informational purposes only.

The Exchange Daily and Weekly are productions of Metora Solutions LLC. Metora is both a HUBZone and a Service Disabled Veteran Owned Small Business.

Every effort is made to keep details accurate as of publication time, but readers should always confirm time-sensitive items such as policy changes, budget figures, and timelines with official documents and briefings. This is not legal, investment, procurement, security, compliance, or technical advice. Always validate with primary sources before action. All rights reserved. Copyright Metora Solutions LLC 2026.

Sources

GSA Press Release: “GSA and NIST Partner to Boost AI Evaluation Science in Federal Procurement,” March 18, 2026.

https://www.gsa.gov/about-us/newsroom/news-releases/gsa-and-nist-partner-to-boost-ai-evaluation-science-in-federal-procurement-03182026

NIST Announcement: “CAISI signs MOU with GSA to boost AI evaluation science in federal procurement through USAi,” March 18, 2026.

https://www.nist.gov/news-events/news/2026/03/caisi-signs-mou-gsa-boost-ai-evaluation-science-federal-procurement-through

USAi Platform Official Site. https://www.usai.gov (accessed May 4, 2026)

White House. America’s AI Action Plan, July 2025.

https://www.whitehouse.gov/wp-content/uploads/2025/07/Americas-AI-Action-Plan.pdf

GSA Draft Clause GSAR 552.239-7001 (proposed March 2026), as described in official proposal documents.

GSA 2025 AI Use Cases Inventory (updated April 2026). https://www.gsa.gov/artificial-intelligence/2025-gsa-ai-use-cases

All links verified live as of May 4, 2026. Additional context cross-referenced with Exchange Daily coverage of ongoing federal AI procurement acceleration.